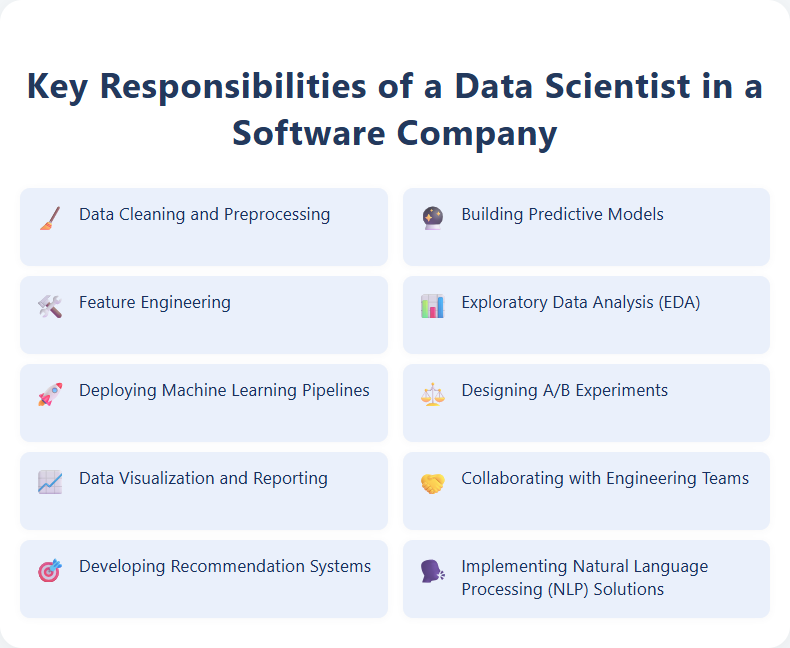

A Data Scientist in a software company analyzes large datasets to extract actionable insights that drive product development and business strategy. They build predictive models and algorithms to enhance user experience and optimize software performance. Their work supports decision-making by turning complex data into clear recommendations.

Data Cleaning and Preprocessing

Data cleaning and preprocessing involve identifying and correcting errors, inconsistencies, and missing values so that datasets are reliable enough for analysis. Proficiency in tools like Python (Pandas, NumPy) or R, along with techniques such as normalization, transformation, and feature engineering, is essential for preparing accurate datasets. Strong attention to detail and problem-solving skills support the creation of clean, structured data that drives effective machine learning models and business intelligence insights.
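As a rough illustration of two of the techniques named above, the sketch below fills missing values with the column median and then applies min-max normalization. It uses only the Python standard library so it runs anywhere; in practice the same steps would more likely be done with Pandas. The `session_minutes` data and both helper names are made up for the example.

```python
from statistics import median

def impute_median(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    fill = median(observed)
    return [fill if v is None else v for v in values]

def min_max_normalize(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

# Hypothetical column with two missing readings.
session_minutes = [12.0, None, 30.0, 18.0, None, 6.0]
filled = impute_median(session_minutes)   # gaps become 15.0, the median
scaled = min_max_normalize(filled)        # smallest -> 0.0, largest -> 1.0
```

Median imputation is only one option; whether it is appropriate depends on why the values are missing.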

Building Predictive Models

Building predictive models involves analyzing large datasets using machine learning algorithms to forecast future trends and behaviors. Expertise in programming languages such as Python or R, along with experience in statistical analysis and data preprocessing, is essential to develop accurate and efficient models. Collaboration with other data scientists and domain experts helps produce robust predictive models that inform business decisions and strategic planning.
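A minimal sketch of the idea, assuming the simplest possible model: an ordinary least-squares line fitted on the earlier observations and evaluated on held-out later ones. The weekly figures are invented for illustration, and a real project would typically rely on an established machine learning library rather than hand-written fitting code.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def mean_absolute_error(actual, predicted):
    """Average absolute difference between actual and predicted values."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)

# Hypothetical data: week number vs. active users (in thousands).
weeks = [1, 2, 3, 4, 5, 6]
users = [3.1, 4.9, 7.2, 8.8, 11.0, 13.1]

# Train on the first four weeks, hold out the last two for evaluation.
slope, intercept = fit_line(weeks[:4], users[:4])
forecast = [slope * w + intercept for w in weeks[4:]]
mae = mean_absolute_error(users[4:], forecast)
```

Evaluating on data the model has not seen is what makes the error estimate meaningful; scoring on the training weeks would understate it.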

Feature Engineering

Feature Engineering involves transforming raw data into meaningful features that enhance the performance of machine learning models. Experts in this field utilize statistical techniques, domain knowledge, and programming skills to extract, select, and create predictive variables that improve model accuracy and interpretability. Proficiency in Python, SQL, and data visualization tools is essential to identify patterns and optimize datasets for advanced analytics projects.
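To make this concrete, here is a small sketch that turns one raw event record into model-ready features: time-based fields extracted from a timestamp, a log transform for a skewed amount, and a binary indicator for a category. The record layout and field names (`timestamp`, `amount`, `plan`) are assumptions made for the example.

```python
import math
from datetime import datetime

def build_features(event):
    """Derive numeric features from a raw event dictionary."""
    ts = datetime.fromisoformat(event["timestamp"])
    return {
        "hour": ts.hour,
        # weekday() returns 0 for Monday through 6 for Sunday.
        "is_weekend": int(ts.weekday() >= 5),
        # log1p compresses large amounts and is defined at zero.
        "log_amount": math.log1p(event["amount"]),
        # Binary indicator for one category value.
        "plan_is_pro": int(event["plan"] == "pro"),
    }

# Hypothetical raw event; 2024-03-09 falls on a Saturday.
raw_event = {"timestamp": "2024-03-09T14:30:00", "amount": 49.0, "plan": "pro"}
features = build_features(raw_event)
```

Which transformations help depends on the model and the domain, so choices like the weekend flag should be validated against model performance rather than assumed.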

Exploratory Data Analysis (EDA)

Exploratory Data Analysis (EDA) involves systematically examining datasets using statistical graphics and descriptive statistics to uncover patterns, detect anomalies, and test hypotheses. Proficiency in tools such as Python (Pandas, Matplotlib, Seaborn) or R and strong skills in data cleaning and preprocessing are essential for effective EDA. Mastery of data visualization techniques and the ability to translate insights into actionable recommendations significantly enhance decision-making processes.
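The descriptive-statistics side of EDA can be sketched in a few lines. The example below computes basic summary figures and flags anomalies with the common 1.5 × IQR rule, using only the standard library. The latency values are invented, and the choice of the IQR rule is an assumption for illustration, not the only way to detect outliers.

```python
from statistics import mean, median, quantiles

def summarize(values):
    """Return summary statistics and IQR-based outliers for a numeric list."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    return {
        "mean": mean(values),
        "median": median(values),
        "q1": q1,
        "q3": q3,
        "outliers": [v for v in values if v < low or v > high],
    }

# Hypothetical response latencies in milliseconds.
latencies_ms = [110, 120, 130, 140, 150, 160, 170, 180, 950]
summary = summarize(latencies_ms)
```

Here the single 950 ms reading pulls the mean well above the median of 150 ms, which is the kind of discrepancy that usually prompts a closer look at the raw data.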

Deploying Machine Learning Pipelines

Deploying Machine Learning Pipelines involves designing, building, and maintaining scalable workflows that automate data preprocessing, model training, evaluation, and deployment into production environments. Expertise in tools such as Kubernetes, Docker, Apache Airflow, and cloud platforms like AWS, GCP, or Azure is essential for efficient pipeline orchestration and management. Candidates should focus on optimizing pipeline performance, ensuring reproducibility, and implementing monitoring strategies to maintain model accuracy and reliability over time.
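The stages described above can be sketched as plain functions chained by a small runner. This is a toy illustration only: the "model" is a single threshold, the data is invented, and the deployment step is reduced to a boolean gate. It does not use Airflow, Kubernetes, or any cloud API; in a real pipeline each stage would be a task managed by an orchestrator.

```python
def preprocess(rows):
    """Drop rows whose label is missing."""
    return [r for r in rows if r["label"] is not None]

def split(rows, test_every=4):
    """Deterministic split: every fourth row goes to the test set."""
    train = [r for i, r in enumerate(rows) if i % test_every != test_every - 1]
    test = [r for i, r in enumerate(rows) if i % test_every == test_every - 1]
    return train, test

def train_threshold(rows):
    """Toy model: midpoint between the mean scores of the two classes."""
    pos = [r["score"] for r in rows if r["label"] == 1]
    neg = [r["score"] for r in rows if r["label"] == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def evaluate(threshold, rows):
    """Fraction of rows where the thresholded score matches the label."""
    correct = sum(int(r["score"] >= threshold) == r["label"] for r in rows)
    return correct / len(rows)

def run_pipeline(rows, min_accuracy=0.8):
    """Run all stages and decide whether the model may be deployed."""
    train_rows, test_rows = split(preprocess(rows))
    threshold = train_threshold(train_rows)
    accuracy = evaluate(threshold, test_rows)
    return {"threshold": threshold, "accuracy": accuracy,
            "deploy": accuracy >= min_accuracy}

# Hypothetical labeled scores; one row has a missing label.
scores = [0.9, 0.2, 0.8, 0.3, 0.5, 0.7, 0.1, 0.95, 0.25]
labels = [1, 0, 1, 0, None, 1, 0, 1, 0]
rows = [{"score": s, "label": l} for s, l in zip(scores, labels)]
result = run_pipeline(rows)
```

Because the split is deterministic, running the pipeline twice on the same input yields the same result, which is the reproducibility property mentioned above. The accuracy gate stands in for the evaluation checks that would normally block a bad model from reaching production.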

Designing A/B Experiments

Designing A/B experiments involves creating controlled tests to compare two versions of a webpage, app feature, or marketing campaign to determine which performs better on key metrics such as conversion rate or user engagement. The role requires expertise in statistical analysis, hypothesis formulation, and data interpretation to ensure valid and actionable results. Candidates should be skilled in using experimentation platforms such as Optimizely (Google Optimize, formerly a common choice, was discontinued in 2023), and have a strong understanding of experimental design principles to optimize user experience and business outcomes.
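One common way to analyze such a test on conversion rates is a two-proportion z-test. The sketch below is a minimal version under the usual assumptions (independent users, large samples, a two-sided alternative); the visitor and conversion counts are hypothetical.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical results: control converts 200 of 4000, variant 260 of 4000.
z, p_value = two_proportion_z_test(200, 4000, 260, 4000)
significant = p_value < 0.05
```

The significance threshold and the required sample size should be fixed before the experiment starts; checking the p-value repeatedly while data is still arriving inflates the false-positive rate.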

Data Visualization and Reporting

Data visualization and reporting involve transforming complex datasets into clear, actionable insights using tools like Tableau, Power BI, and Excel. Proficiency in designing interactive dashboards, generating automated reports, and applying data storytelling techniques enhances decision-making processes. Candidates should leverage statistical analysis, attention to detail, and communication skills to present data trends effectively to diverse stakeholders.
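Automated reporting often starts with a script that turns raw figures into a file a dashboard or spreadsheet can load. The sketch below renders weekly counts as CSV with a week-over-week change column; the `weekly_report` helper and the signup numbers are hypothetical, and the output is meant to be opened in a tool such as Excel rather than read directly.

```python
import csv
import io

def weekly_report(rows):
    """Render (week, signups) pairs as CSV with week-over-week change."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["week", "signups", "change_pct"])
    previous = None
    for week, signups in rows:
        if previous:
            change = f"{(signups - previous) / previous * 100:+.1f}"
        else:
            # First week, or a zero baseline: no meaningful percentage.
            change = ""
        writer.writerow([week, signups, change])
        previous = signups
    return buffer.getvalue()

# Hypothetical weekly signup counts.
report = weekly_report([("2024-W01", 200), ("2024-W02", 250), ("2024-W03", 225)])
print(report)
```

Precomputing the change column in the script keeps the figure consistent across every dashboard that consumes the file.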

Collaborating with Engineering Teams

Collaborating with Engineering Teams involves actively engaging in cross-functional communication to align project goals, share technical expertise, and streamline development processes. This role requires coordinating tasks, providing feedback on design and implementation, and ensuring timely delivery of quality solutions. Strong problem-solving skills and a proactive approach are essential to support innovation and drive continuous improvement within engineering workflows.

Developing Recommendation Systems

Developing recommendation systems involves designing and implementing machine learning models that analyze user behavior and preferences. The work includes collaborating with other data scientists to optimize models for accuracy, scalability, and real-time performance across multiple platforms. System performance is evaluated continuously using metrics such as precision, recall, and user engagement to improve personalized user experiences.
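Precision and recall for a recommender are usually measured on the top k items shown to a user. The sketch below computes both for a single user, assuming a ranked list of recommended item IDs and a set of items the user actually engaged with; the item IDs are placeholders.

```python
def precision_at_k(recommended, relevant, k):
    """Share of the top-k recommendations that the user found relevant."""
    top_k = recommended[:k]
    hits = sum(1 for item in top_k if item in relevant)
    return hits / k

def recall_at_k(recommended, relevant, k):
    """Share of all relevant items that appear in the top-k recommendations."""
    if not relevant:
        return 0.0
    top_k = recommended[:k]
    hits = sum(1 for item in top_k if item in relevant)
    return hits / len(relevant)

# Hypothetical ranked recommendations and ground-truth engagement.
recommended = ["a", "b", "c", "d", "e"]
relevant = {"b", "e", "f"}

p3 = precision_at_k(recommended, relevant, 3)  # 1 hit among 3 shown
r5 = recall_at_k(recommended, relevant, 5)     # 2 of 3 relevant items found
```

In practice these values are averaged over many users, and offline metrics like these are checked against online engagement, since the two do not always move together.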

Implementing Natural Language Processing (NLP) Solutions

Implementing Natural Language Processing (NLP) solutions involves designing, developing, and deploying algorithms that enable machines to understand, interpret, and generate human language effectively. This role requires proficiency in programming languages such as Python, experience with NLP libraries like spaCy or NLTK, and knowledge of machine learning frameworks to build models for tasks like sentiment analysis, entity recognition, and language translation. Candidates should also be skilled in data preprocessing, model evaluation, and integrating NLP components into scalable applications to enhance user interaction and automate text-based workflows.
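As a toy illustration of the preprocessing and sentiment analysis steps mentioned above, the sketch below tokenizes text with a regular expression and scores it against a small word list. The lexicon is invented for the example and far too small for real use; production systems would typically use a trained model or a library such as spaCy or NLTK instead.

```python
import re
from collections import Counter

# Hypothetical mini-lexicons, for illustration only.
POSITIVE = {"great", "love", "fast", "helpful"}
NEGATIVE = {"slow", "crash", "confusing", "broken"}

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())

def sentiment_score(text):
    """Score in [-1, 1]: +1 all positive words, -1 all negative, 0 neutral."""
    counts = Counter(tokenize(text))
    pos = sum(counts[word] for word in POSITIVE)
    neg = sum(counts[word] for word in NEGATIVE)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total

reviews = [
    "I love the new editor, it is fast and helpful!",
    "The app is slow and the export is broken.",
    "Great search, but slow sync.",
]
scores = [sentiment_score(r) for r in reviews]
```

A lexicon approach like this ignores negation and context ("not fast" still counts as positive), which is one reason learned models are preferred for anything beyond a quick baseline.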